MAGISTER - AI

Agents Execution Platform

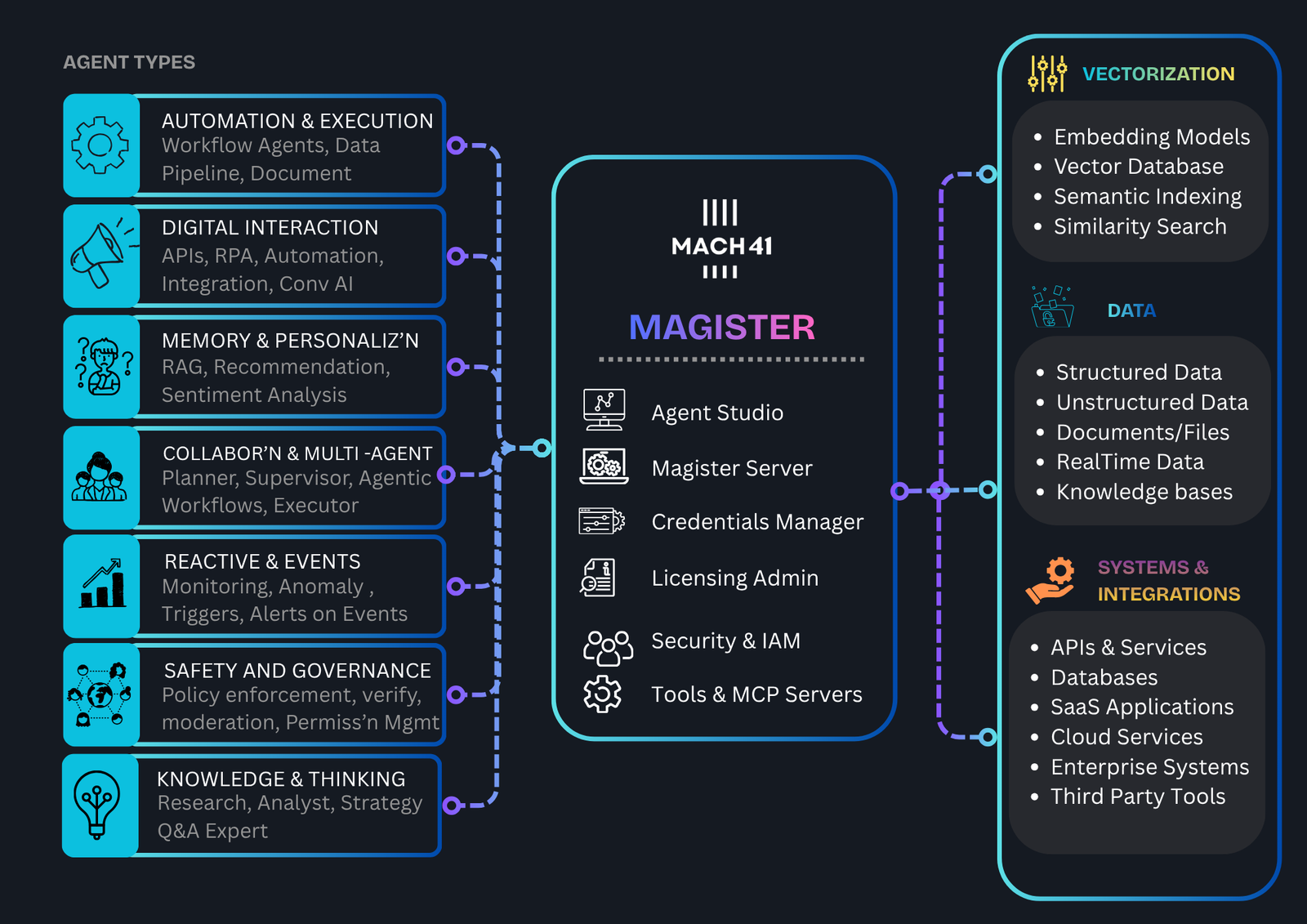

Enterprise-focused platform for building and running AI agents. A governed execution layer with built-in controls and non-functional requirements (NFRs), cloud-ready and production-ready.

Magister is an enterprise-ready operating system for AI agents, with a low-code IDE for developing agents in natural language and an embedded integration toolkit. Build and host AI agents with confidence.